Art2Real: Translating Artworks to Photo-Realistic Images

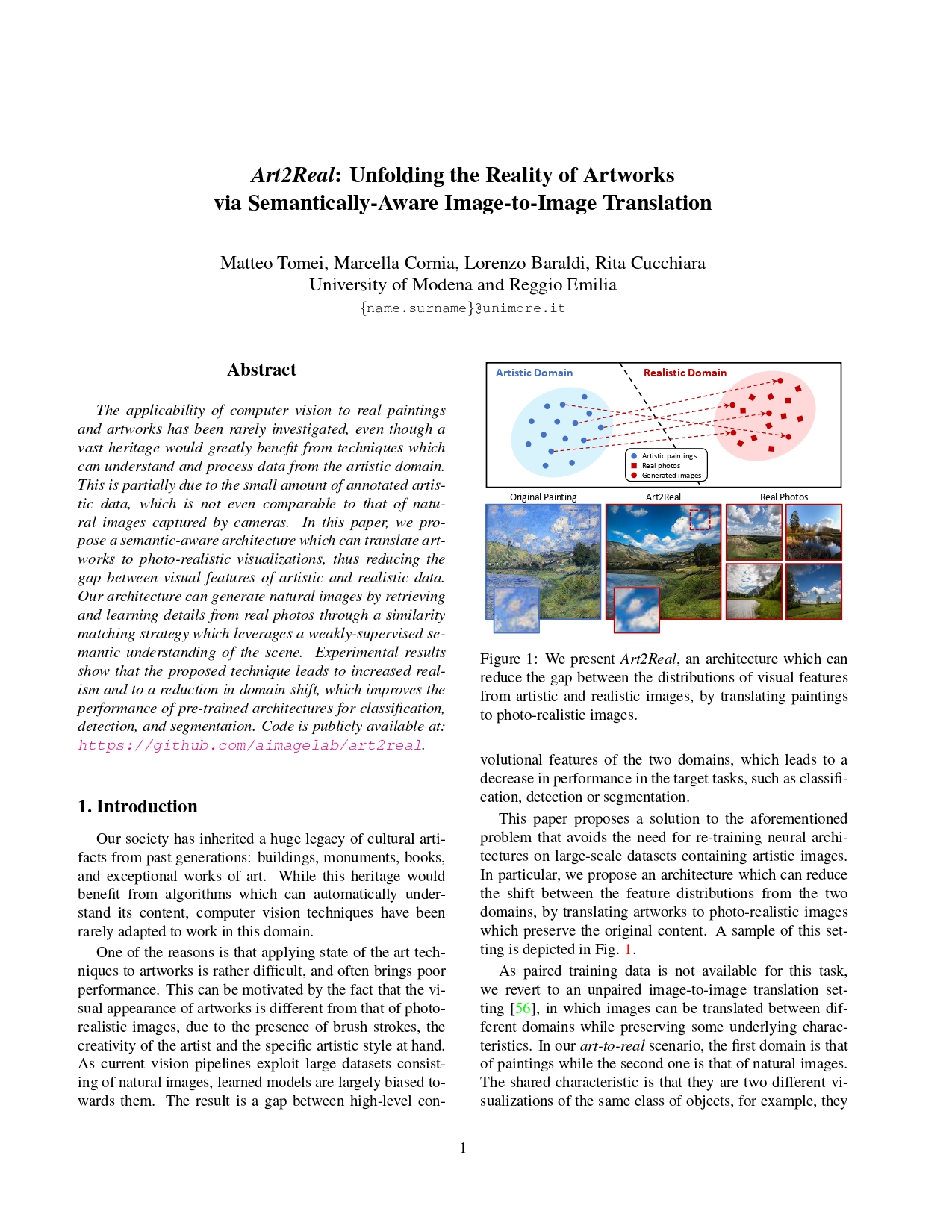

Deep learning models are usually trained on images captured from the world as it is. This leads to an incompatibility between such methods and digital data from the artistic domain, on which current techniques under-perform. A possible solution is to reduce the domain shift at the pixel level by translating artistic images into realistic copies.

We developed a model that translates paintings into photo-realistic images and is trained without paired examples. The idea is to enforce patch-level similarity between real and generated images, so that photo-realistic details are reproduced from a memory bank of real images. This similarity constraint is used within an unpaired image-to-image translation framework, which maps each image from one distribution to a new image belonging to the other distribution.
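The patch-level similarity idea can be illustrated with a small NumPy sketch. This is a simplified illustration and not the model's actual loss: it assumes raw pixel patches, cosine similarity, and a nearest-neighbour lookup, and the names `extract_patches`, `normalize_rows`, and `patch_similarity_loss` are hypothetical helpers introduced only for this example.

```python
import numpy as np

def extract_patches(image, size, stride):
    """Return every size x size patch of an H x W x C image as a flat row."""
    h, w, _ = image.shape
    patches = [
        image[y:y + size, x:x + size].reshape(-1)
        for y in range(0, h - size + 1, stride)
        for x in range(0, w - size + 1, stride)
    ]
    return np.stack(patches)

def normalize_rows(rows, eps=1e-8):
    """Center each row and scale it to unit length, so dot products are cosine similarities."""
    rows = rows - rows.mean(axis=1, keepdims=True)
    return rows / (np.linalg.norm(rows, axis=1, keepdims=True) + eps)

def patch_similarity_loss(generated, memory_bank, size=4, stride=4):
    """Mean cosine distance between each generated patch and its closest real patch.

    Assumption: the memory bank is a 2-D array with one flattened real patch per row.
    """
    gen = normalize_rows(extract_patches(generated, size, stride))
    bank = normalize_rows(memory_bank)
    similarity = gen @ bank.T            # shape: (generated patches, bank patches)
    nearest = similarity.argmax(axis=1)  # index of the most similar real patch
    loss = float(np.mean(1.0 - similarity.max(axis=1)))
    return loss, nearest

# Toy data: random arrays stand in for a real photo and a generated image.
rng = np.random.default_rng(0)
real_image = rng.random((16, 16, 3))
memory_bank = extract_patches(real_image, size=4, stride=4)

loss_same, nearest_same = patch_similarity_loss(real_image, memory_bank)
loss_other, _ = patch_similarity_loss(rng.random((16, 16, 3)), memory_bank)
```

In this toy setting, an image whose patches are already in the memory bank gets a loss close to zero, while an unrelated image gets a larger one. In a trained model, a term of this kind would be minimised alongside the usual unpaired translation objectives.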

Paper

Art2Real: Unfolding the Reality of Artworks via Semantically-Aware Image-to-Image Translation

M. Tomei, M. Cornia, L. Baraldi, R. Cucchiara

CVPR 2019

Publications

| # | Authors | Title | Venue | Year | DOI | Type |
|---|---------|-------|-------|------|-----|------|
| 1 | Tomei, Matteo; Cornia, Marcella; Baraldi, Lorenzo; Cucchiara, Rita | "Image-to-Image Translation to Unfold the Reality of Artworks: an Empirical Analysis" | Image Analysis and Processing – ICIAP 2019, Trento, Italy, pp. 741-752, 9-13 September 2019 | 2019 | 10.1007/978-3-030-30645-8_67 | Conference |
| 2 | Tomei, Matteo; Baraldi, Lorenzo; Cornia, Marcella; Cucchiara, Rita | "What was Monet seeing while painting? Translating artworks to photo-realistic images" | Computer Vision – ECCV 2018 Workshops, Munich, Germany, 8-14 September 2018 | 2019 | 10.1007/978-3-030-11012-3_46 | Conference |
| 3 | Tomei, Matteo; Cornia, Marcella; Baraldi, Lorenzo; Cucchiara, Rita | "Art2Real: Unfolding the Reality of Artworks via Semantically-Aware Image-to-Image Translation" | 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition, vol. 2019-, Long Beach, CA, USA, pp. 5842-5852, June 16-20 2019 | 2019 | 10.1109/CVPR.2019.00600 | Conference |