Research on New Visions: Sensors, Mobile, and Embedding

Egocentric Vision for Detecting Social Relationships

Social interactions are so natural that we rarely stop to wonder who is interacting with whom, or which people are gathering into a group and which are not. Humans make these judgments effortlessly, overlooking how much harder the task becomes when only visual cues are available. Different situations call for different behaviors: we accept standing in close proximity to strangers at a public event, but we would feel uncomfortable having people we do not know close to us while having a coffee. Moreover, we rarely exchange mutual gaze with people we are not interacting with, which makes gaze an important cue when trying to discern different social clusters.

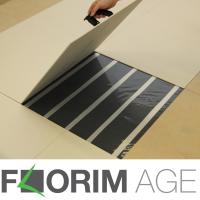

Sensing Floors

Surveillance systems can benefit greatly from the integration of multiple heterogeneous sensors. In this paper we describe an innovative sensing floor. Thanks to its low cost and ease of installation, the floor is suitable for both private and public environments, from narrow zones to wide areas. The floor is obtained by adding a sensing layer below commercial floating tiles, yielding a sensor that is scalable, reliable, and completely invisible to users. The temporal and spatial resolutions of the sensor data are high enough to identify the presence of people, recognize their behavior, and detect events in a privacy-compliant way.

Real Time Ellipse Detection on Mobile Devices

This research activity concerns the development of a novel ellipse detection method that is able to run in real time on mobile devices. The method provides a major speedup over other state-of-the-art approaches while achieving comparable (or even better) detection accuracy.

Collaborative Robot Programming

Unlike conventional robots, which require costly caging, specialized programming, and extensive integration to execute a single task, a collaborative robot such as Baxter® can be quickly and easily trained and deployed as often as needed. The robot has been developed to collaborate directly with humans, learning from and interacting with them.

The research activity is carried out in collaboration with the La.P.I.S. Robofacturing Design Lab.